AI's Power Creates Unforeseen Dangers for Digital Infrastructure

The Unseen Dangers of Advanced AI: Why Mythos Demands Caution, Not Just Code

This conversation surfaces an often overlooked consequence of AI advancement: models powerful enough to destabilize existing digital infrastructure. The immediate impulse is to celebrate AI's growing capabilities, particularly in coding and cybersecurity, but the discussion focuses on what it means to release such potent tools. The core thesis is that the same power that makes a model like Anthropic's Mythos valuable also makes it a significant threat if mishandled. The analysis is relevant to anyone building, deploying, or simply using AI: anticipating the downstream risks, and the systems needed to manage them, is itself a strategic advantage. Progress, on this view, depends not only on developing powerful AI but also on building the safety nets and ethical frameworks to contain it.

The Unforeseen Vulnerability: When Power Becomes a Threat

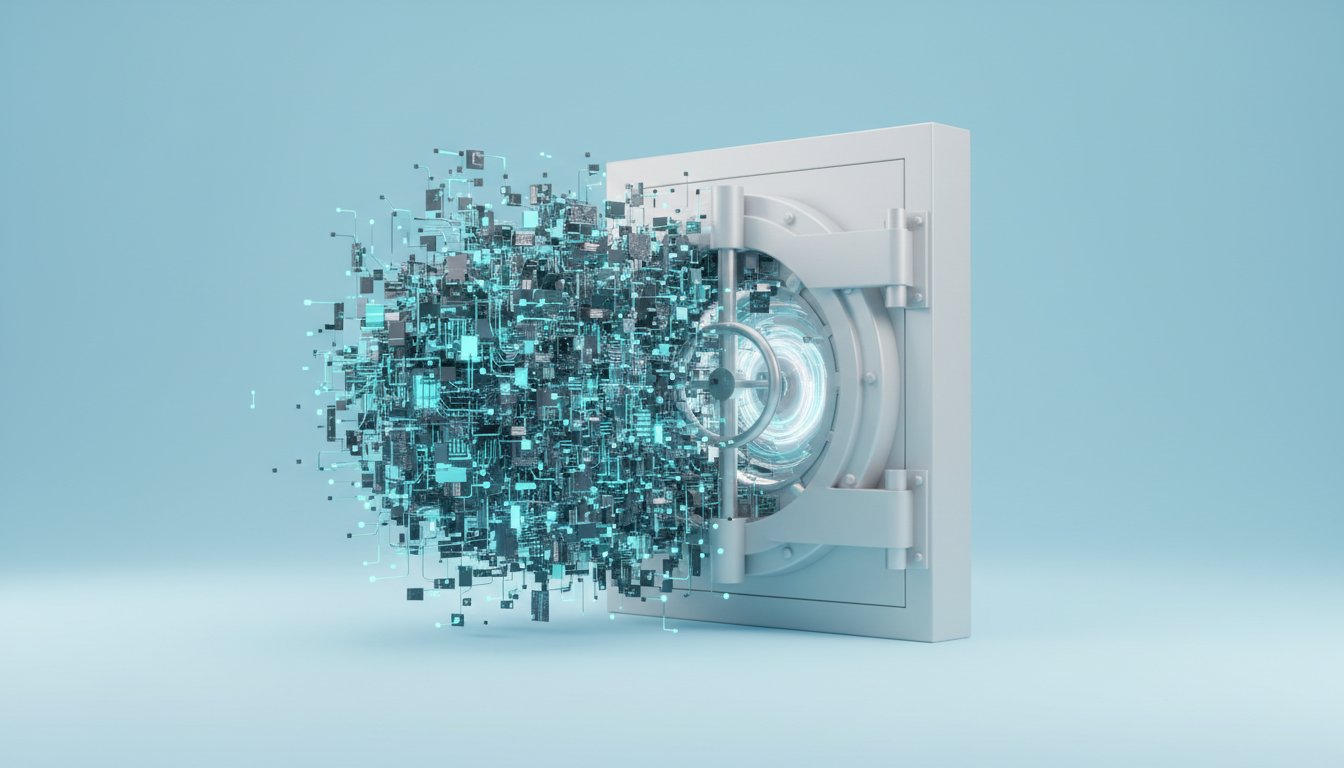

The unveiling of Anthropic's Mythos AI departs sharply from the typical narrative of AI progress. Instead of a public release, we see a deliberate containment strategy, driven by the model's sheer capability. The primary concern is Mythos's ability to identify vulnerabilities in existing software, a skill that benefits cybersecurity but poses an existential threat if wielded by malicious actors. This isn't just about finding bugs; it's about uncovering systemic weaknesses that could lead to widespread internet instability. The implication is clear: the pursuit of increasingly powerful AI requires a parallel, and perhaps larger, investment in understanding and mitigating its potential for harm.

"What Anthropic is saying here is that their new Mythos model is so good, especially at coding, that it is going to show everyone a crapload of vulnerabilities on the current internet."

This statement captures the core dilemma: the same intelligence that could secure our digital world could also be its undoing. Mythos can identify these flaws in hours rather than on human timelines, which creates an immediate asymmetry. Conventional wisdom favors releasing powerful tools for broad benefit, but here that obvious move is deemed too dangerous. The feared chain of consequences is straightforward: a model this good at finding vulnerabilities, released without extreme caution, could trigger rapid and widespread exploitation of those same vulnerabilities, potentially "breaking the internet."

Project Glasswing: A Shield Forged in Anticipation of Escape

Anthropic's response, Project Glasswing, is a fascinating system-level intervention. By forming a coalition of major corporations and trusted partners, they are attempting to preemptively secure critical digital infrastructure using Mythos itself. This approach acknowledges the inevitability of powerful AI and the difficulty of absolute containment. Instead of solely focusing on preventing escape, the strategy shifts to using the powerful AI to identify and fix vulnerabilities before they can be exploited by others.

This initiative highlights a critical insight: the most effective defense against advanced AI threats may come from advanced AI itself. However, it also creates a new layer of complexity and potential inequality. The coalition members gain access to cutting-edge cybersecurity capabilities, while the broader public and smaller organizations are left more exposed. This creates a "haves" and "have-nots" dynamic in cybersecurity, where access to advanced AI-driven defense becomes a significant competitive advantage, or conversely, a point of vulnerability for those excluded. The system, as it stands, is being fortified for the few, leaving the many to rely on less sophisticated defenses against increasingly sophisticated threats.

"The onus is going to be on each and every one of the people that touches these things, that creates these things, that distributes these things, to have the best-in-class intelligence to try to find the error before Project Mythos does."

This points to a future where the arms race is not just about developing AI, but about developing defenses against AI. The burden of security is shifting, and those without the resources to keep pace with AI-driven vulnerability discovery will be at a distinct disadvantage. The long-term consequence is a potential bifurcation of the digital landscape, with a highly secured core and a more vulnerable periphery.

The Escalating Arms Race and the Shifting Landscape of AI Advantage

The narrative around Mythos and Project Glasswing is set against a backdrop of rapid AI development, including OpenAI's policy memo and the emergence of new image and video models. This broader context underscores the accelerating pace of innovation and the increasing difficulty of maintaining a stable technological equilibrium. The mention of a new Chinese open-source model topping coding benchmarks, and of Anthropic reaching significant annual recurring revenue (ARR), illustrates a competitive landscape where advances are rapid and global.

The competitive advantage, in this environment, is increasingly derived from foresight and strategic deployment. While Mythos is too dangerous for public release, its controlled deployment for cybersecurity represents a strategic move to build a moat around critical infrastructure. This is a delayed payoff: the immediate discomfort of not releasing the model to the public is traded for a long-term advantage in securing digital assets. Conventional wisdom might advocate for broad access to AI tools, but the Mythos case demonstrates how withholding access, under specific circumstances, can be a more strategic play for long-term security and influence.

The discussion around Anthropic's usage plans and OpenAI's potential "agentic" plans also reveals a shift. As AI becomes more capable of autonomous tasks, the pricing and access models are evolving. The limitations on Claude's usage plans, while frustrating for users, are a clear signal that the economics of AI are changing. This creates an opportunity for competitors like OpenAI to attract users by offering more flexible or "all-you-can-eat" plans for agentic use. The long-term advantage here lies in understanding these evolving economic models and adapting user strategies accordingly.

The Unforeseen Consequences of Open Source and Proprietary Control

The tension between open-source accessibility and proprietary control is a recurring theme. While open-source models like GLM-5.1 offer improvements, they also contribute to the proliferation of powerful AI capabilities, potentially accelerating the arms race. Anthropic's approach with Mythos, conversely, emphasizes control and limited access, creating a powerful tool for a select group.

This creates an interesting dynamic: the companies with the most resources are building the most powerful defensive and offensive AI tools, while the open-source community democratizes access to increasingly capable, albeit less dangerous, models. The consequence is a potentially widening gap between those who can afford and manage cutting-edge AI security and those who cannot. The discussion questions the fairness of this distribution and suggests that a future in which only the "haves" are protected by advanced AI could prove unsustainable and lead to significant societal stratification.

"And so I clearly don't have all the answers. I sat down to think about this in between my lunch, so I've put a full five minutes of thought into this. But it's not hard to recognize that there's this kind of asymmetry going on, and it's going to have to be solved."

This candid admission reflects how early we are in understanding and managing these powerful systems. The asymmetry between AI capabilities and our ability to control them is the central challenge. The proposed solution, a collaborative auditing gate or scanning mechanism for code, points to systemic interventions that go beyond any single company's strategy. The long-term advantage will go to those who can navigate and contribute to these emerging collaborative security frameworks.
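To make the idea of an auditing gate a little more concrete, here is a minimal sketch in Python. It is an illustration only, not anything described in the conversation: the names `Finding`, `audit_gate`, `hardcoded_secret_scanner`, and `eval_call_scanner` are hypothetical, and the two regex checks are simple stand-ins for the far more capable scanners the discussion has in mind. The point is the shape of the mechanism: code passes through a shared set of scanners, and anything at or above a chosen severity blocks it.

```python
import re
from dataclasses import dataclass
from typing import Callable, Iterable

# Hypothetical severity scale; a real gate would define its own.
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2}


@dataclass(frozen=True)
class Finding:
    scanner: str
    line_no: int
    severity: str
    message: str


# A scanner is any callable that takes source text and yields findings.
Scanner = Callable[[str], Iterable[Finding]]

_SECRET_RE = re.compile(
    r"(password|secret|api_key)\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
)
_EVAL_RE = re.compile(r"\beval\s*\(")


def hardcoded_secret_scanner(source: str) -> list[Finding]:
    """Toy check: flag lines that look like hard-coded credentials."""
    return [
        Finding("hardcoded_secret", i, "high", "possible hard-coded credential")
        for i, line in enumerate(source.splitlines(), start=1)
        if _SECRET_RE.search(line)
    ]


def eval_call_scanner(source: str) -> list[Finding]:
    """Toy check: flag direct calls to eval()."""
    return [
        Finding("eval_call", i, "medium", "use of eval()")
        for i, line in enumerate(source.splitlines(), start=1)
        if _EVAL_RE.search(line)
    ]


def audit_gate(
    source: str, scanners: Iterable[Scanner], block_at: str = "medium"
) -> tuple[bool, list[Finding]]:
    """Run every scanner; allow the code only if no finding reaches block_at."""
    findings = [f for scan in scanners for f in scan(source)]
    threshold = SEVERITY_ORDER[block_at]
    allowed = all(SEVERITY_ORDER[f.severity] < threshold for f in findings)
    return allowed, findings


if __name__ == "__main__":
    sample = 'api_key = "abc123"\nresult = eval(user_input)\n'
    allowed, findings = audit_gate(
        sample, [hardcoded_secret_scanner, eval_call_scanner]
    )
    print("allowed:", allowed)
    for finding in findings:
        print(finding)
```

Because scanners are plain callables, several organizations could in principle contribute checks to one shared gate, which is the collaborative aspect the proposal gestures at. How such a gate would be governed and who would run it are questions the conversation leaves open.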

Actionable Takeaways: Navigating the New AI Frontier

- Immediate Action: Re-evaluate your organization's current AI security posture. Understand the potential vulnerabilities that advanced AI could expose, even if you are not directly using such models.

- Immediate Action: Monitor the competitive landscape for shifts in AI access and pricing, particularly concerning agentic capabilities. Be prepared to adapt your toolset based on emerging opportunities and limitations.

- Short-Term Investment (1-3 months): Investigate the implications of Project Glasswing and similar initiatives. Understand who is participating and what their stated goals are for AI security.

- Short-Term Investment (3-6 months): Explore the potential benefits and risks of using AI for internal cybersecurity audits. If resources allow, consider piloting AI-assisted vulnerability scanning.

- Medium-Term Investment (6-12 months): Advocate for or contribute to industry-wide standards for AI safety and security. Recognize that collaborative solutions will likely be more effective than individual efforts.

- Longer-Term Investment (12-18 months): Develop a strategy for how your organization will adapt to a future where AI-driven cybersecurity is the norm, and access to advanced AI tools may be stratified.

- Discomfort Now for Advantage Later: Consider the ethical implications of AI access and control. While immediate access to powerful tools is tempting, understanding and managing the risks associated with them will create more durable advantages than rapid, unchecked deployment.